The Space Between Cause & Effect

The following is reproduced with permission from Cinema, a former Twitter personality & blogger who left the social media world. The reproduction is intended to preserve & share insightful posts from Cinema's blog. This post was originally published in October 2014.

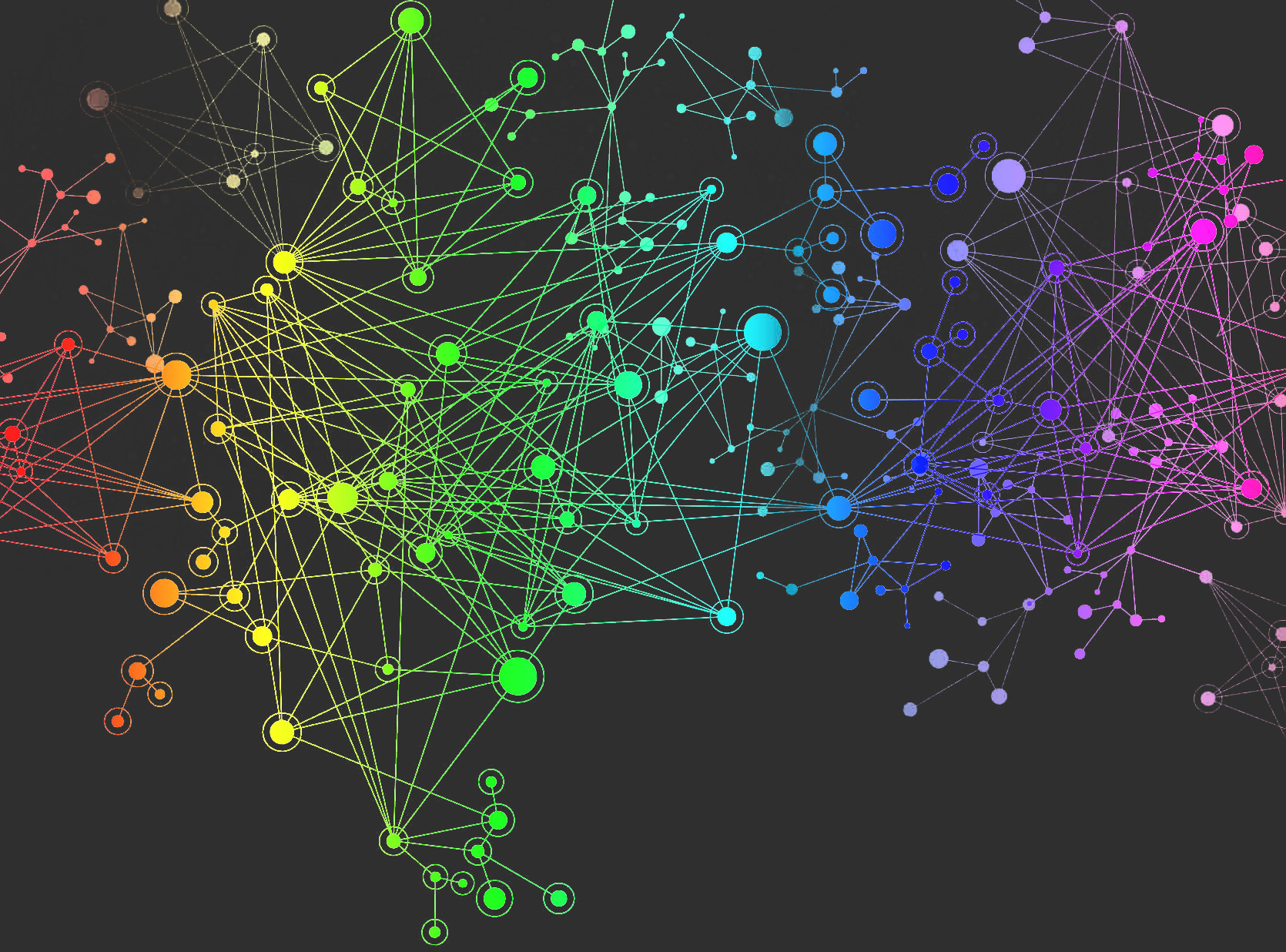

We often find ourselves hooked on finding straightforward explanations for why things turn out the way they do. Sometimes we’re right, but other times an explanation is difficult to forge even when the effects are plain to see. The seemingly straight arrow of Cause and Effect can get complicated pretty quickly in a Complex System. From Michael Mauboussin’s Think Twice:

Humans have a deep desire to understand cause and effect, as such links probably conferred humans with evolutionary advantage. In complex adaptive systems, there is no simple method for understanding the whole by studying the parts, so searching for simple agent-level causes of system-level effects is useless. Yet our minds are not beyond making up a cause to relieve the itch of an unexplained effect. When a mind seeking links between cause and effect meets a system that conceals them, accidents will happen.

We seem to be obsessed with offering explanations and acting on them. Forgoing explanatory theories while still obtaining the benefits of the process is not in vogue…but it might be useful when sifting through our data-driven age. From Taleb’s Antifragile:

For a theory is a very dangerous thing to have.

And of course one can rigorously do science without it. What scientists call phenomenology is the observation of an empirical regularity without a visible theory of it…. Theories are superfragile; they come and go, then come and go, then come and go again; phenomenologies stay, and I can’t believe people don’t realize that phenomenology is “robust” and usable, and theories, while overhyped, are unreliable for decision making – outside physics.

Taleb later continues:

We are built to be dupes for theories. But theories come and go; experience stays. Explanations change all the time, and have changed all the time in history (because of causal opacity, the invisibility of causes) with people involved in the incremental development of ideas thinking they always had a definitive theory; experience remains constant.

… Take for instance the following statement, entirely evidence-based: if you build muscle, you can eat more without getting more fat deposits in your belly and can gorge on lamb chops without having to buy a new belt. Now in the past the theory to rationalize it was: “Your metabolism is higher because muscles burn calories.” Currently I tend to hear “You become more insulin-sensitive and store less fat.” Insulin, shminsulin; metabolism, shmetabolism: another theory will emerge in the future and some other substance will come about, but the exact same effect will continue to prevail.

The same holds for the statement *Lifting weights increases your muscle mass.* In the past they used to say that weight lifting caused the “micro-tearing of muscles,” with subsequent healing and increase in size. Today some people discuss hormonal signaling or genetic mechanisms, tomorrow they will discuss something else. But the effect has held forever and will continue to do so.

Mauboussin (again from Think Twice) offers some actionable points (in bold, followed by my paraphrasing of his explanations) on how to improve the odds of making better decisions in complex systems.

1. **Consider the system at the correct level.** The basic idea here is to distinguish examining the trees from examining the forest. If you want to study the forest (the system), then study it at the level of the forest instead of getting seduced by the intricacies of the trees. Consider the levels of organization and study the level that you wish to influence. Systemic versus Reductionist.

2. **Watch for tightly coupled systems.** The more diverse the system, the better the wisdom of the crowd. When diversity declines, the system becomes much more susceptible to influences among its components. The system may tend to couple together in these circumstances, so it is worth watching for.

3. **Use simulations to create virtual worlds.** This one seems straightforward: simulate the scenario to test the strength of your ideas and pull lessons that may apply to the complex system you want to engage. This one (I think) is a bit tricky, since the real world and a simulated world might not match in their layers of complexity.

Are you too attached to theories?
